Little Fire

- About Us

- Competition

- Store

Empower Your Child with the Stanford Edge: Master Embodied AI & Robotics Live

Learn from Hunter, Stanford MSCS and ACM HRI 2023 Best Systems Paper Award winner. Our exclusive live sessions bring Silicon Valley’s cutting-edge Embodied AI and Spatial Computing labs directly to your child, transforming them from passive AI users into future innovators.

From Silicon Valley Labs to the Future: Embodied AI & Spatial Computing Workshop

Module 1: The Stanford Mindset & Embodied AI Foundations

• Stanford PBL Logic: Learn the "Problem-Based Learning" methodology used in top-tier Silicon Valley labs to define and solve complex challenges.

• Why AI Needs a "Body": Introduction to Embodied AI—understanding how intelligence evolves when it interacts with the physical world.

Module 2: Spatial Computing & The Vision Pro Era

• Apple Vision Pro Integration: Exploring the transition from 2D screens to 3D spatial environments.

• Gesture & Perception: Analyzing machine vision technology to create intuitive controls for robotic systems.

Module 3: Human-Robot Interaction (HRI) Masterclass

• Best Systems Paper Deep Dive: Hunter deconstructs his award-winning research on how robots perceive and respond to human behavior.

• Hands-on Prototyping: Designing logic for robots to "understand" spatial commands and environmental cues.

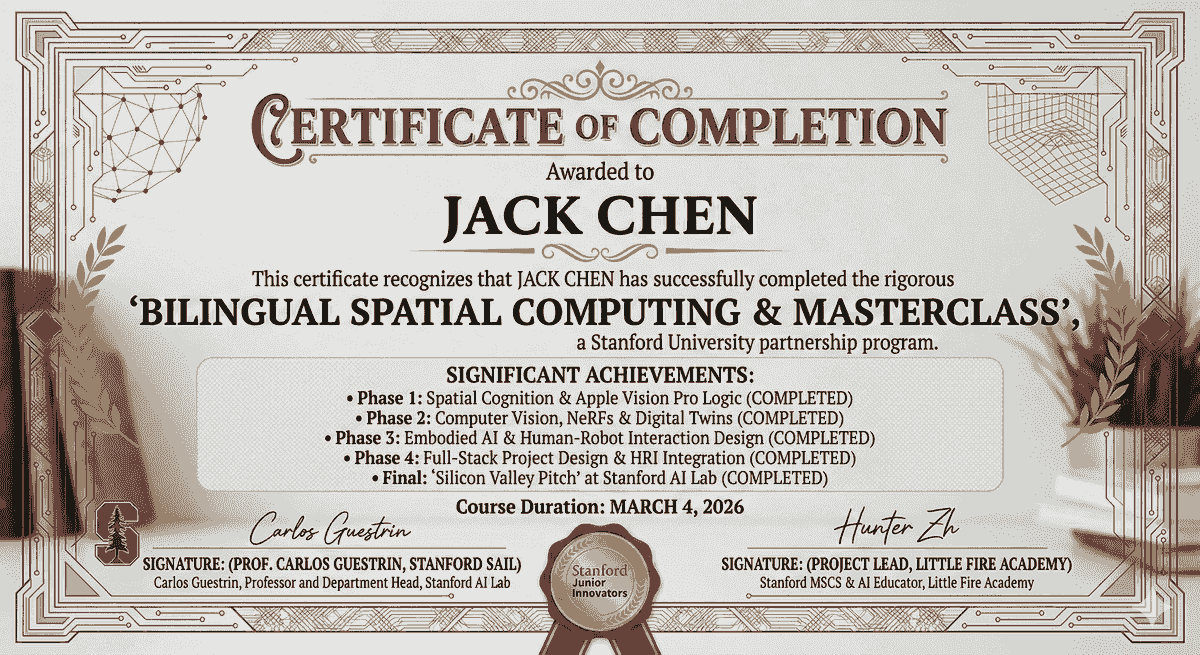

Stanford Junior Innovators

Spatial Computing & Embodied AI Masterclass

🎓 Learning Objectives

• Master Spatial Thinking: Move from 2D screens to 3D world interactions.

• Embodied AI Mastery: Understand why AI needs a "body" to interact with the physical world.

• Stanford PBL Logic: Apply the "Empathy-to-Prototype" framework to solve real-world problems.

Phase 1: Spatial Cognition & AR Foundations

Session 1: Hello, Digital Twin! — Opening the Door to Spatial Computing

• Scientific Principle: Understanding the leap from 2D (screens) to 3D (space). Defining "Spatial Computing."

• Hands-on Demo: Using an iPad/iPhone to scan a physical object (like an apple) and generate its digital model in mid-air.

• Stanford Mindset: "Observation Training"—Analyzing how light, shadows, and reflections affect objects in the physical world and simulating them in AR.

Session 2: The Magic of Gestures — Mastering Vision Pro Interaction Logic

• Interaction Tech: Learning Apple's core interaction language (Tap, Pinch, Drag).

• Toolbox: Using Reality Composer to set up "Tap Triggers"—making a virtual robot dance when you click a floating button.

• Logic Training: Understanding the relationship between "Triggers" and "Actions" to build fundamental programming reflexes.
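The trigger-to-action relationship can be sketched in a few lines of code. This is only an illustration in Python, not Reality Composer's actual API: the `Scene` class, the trigger names, and `robot_dance` are all hypothetical names chosen for this example.

```python
from typing import Callable, Dict, List


class Scene:
    """Hypothetical mini event system: maps trigger names to actions."""

    def __init__(self) -> None:
        self._actions: Dict[str, List[Callable[[], str]]] = {}
        self.log: List[str] = []

    def on(self, trigger: str, action: Callable[[], str]) -> None:
        # Register an action to run whenever the named trigger fires.
        self._actions.setdefault(trigger, []).append(action)

    def fire(self, trigger: str) -> None:
        # Run every action registered for this trigger, in order.
        for action in self._actions.get(trigger, []):
            self.log.append(action())


def robot_dance() -> str:
    # Stand-in for playing a dance animation on the virtual robot.
    return "robot dances"


scene = Scene()
scene.on("tap:floating_button", robot_dance)

scene.fire("tap:floating_button")    # a registered trigger runs its action
scene.fire("pinch:floating_button")  # nothing registered, so nothing happens
print(scene.log)  # ['robot dances']
```

The point of the sketch is the separation: the trigger says when something happens, the action says what happens, and the two are connected only by registration.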


Phase 2: Giving AI a "Body" (Embodied AI)

Session 3: Robots "Seeing" the World — Introduction to Computer Vision

• AI Core: Explaining how robots recognize objects via cameras and LiDAR.

• Practical Exercise: Setting up "Plane Detection" in AR to help the AI understand the difference between the "floor" and a "tabletop."

• Embodied AI Concept: Understanding that AI can only perform meaningful actions once it perceives its physical environment.

Session 4: Command Line Heroes — Programming Your First Robot Logic

• Coding in Action: Using Swift Playgrounds (Apple’s learn-to-code app for the Swift language).

• Mission: Guiding a robot named "Byte" through a 3D obstacle course using precise code commands.

• Computational Thinking: Learning how "Loops" and "Functions" are used in real-world robotics control.
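As a rough illustration of how loops and functions combine in robot control, the sketch below moves a simulated robot on a grid. It is written in Python for brevity, whereas the session itself uses Swift Playgrounds; the `Robot` class and the `walk` and `trace_square` helpers are hypothetical names, not part of any course material or Apple API.

```python
# Headings as (dx, dy) unit steps, listed clockwise starting from north.
HEADINGS = [(0, 1), (1, 0), (0, -1), (-1, 0)]


class Robot:
    """Hypothetical grid robot: a position plus a heading index."""

    def __init__(self) -> None:
        self.x, self.y, self.heading = 0, 0, 0

    def move_forward(self) -> None:
        dx, dy = HEADINGS[self.heading]
        self.x += dx
        self.y += dy

    def turn_right(self) -> None:
        self.heading = (self.heading + 1) % 4


def walk(robot: Robot, steps: int) -> None:
    # A loop replaces writing move_forward() once per step.
    for _ in range(steps):
        robot.move_forward()


def trace_square(robot: Robot, side: int) -> None:
    # A function built from a loop and another function:
    # four sides, each followed by a right turn.
    for _ in range(4):
        walk(robot, side)
        robot.turn_right()


byte = Robot()
walk(byte, 2)
print((byte.x, byte.y))  # (0, 2)

byte = Robot()
trace_square(byte, 3)
print((byte.x, byte.y, byte.heading))  # (0, 0, 0): back at the start
```

Naming a repeated pattern (`walk`) and reusing it inside a larger one (`trace_square`) is the habit the session's "Loops" and "Functions" exercises aim to build.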

Phase 3: Human-Robot Interaction & Creative Design

Session 5: The "Emotions" of Robots — Designing Human-Robot Interfaces (HRI)

• Design Aesthetics: Learning the Stanford d.school’s "Empathy-Driven Design." (e.g., What color should a robot’s eyes be to appear friendly?)

• Hands-on: Adding spatial audio and dialogue bubbles to your robot in Reality Composer.

• HRI Basics: Learning how to make robotic feedback feel more human to improve the user experience.

Session 6: Spatial Puzzles — Creating Your First AR Maze

• Integrated Project: Using the room's physical floor to lay out a complex digital maze.

• Tech Point: Mastering "Physics & Collision Detection."

• Challenge: Programming the AI robot to autonomously find the fastest path out of the maze.
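One common way to find a shortest route through a grid maze is breadth-first search (BFS). The sketch below is an assumption about how such a challenge could be solved, not the course's own solution: the maze layout, the `S`/`E`/`#` symbols, and the function name are made up for illustration, and "fastest" is taken to mean "fewest grid steps."

```python
from collections import deque

# Hypothetical maze: S = start, E = exit, # = wall, . = open floor.
MAZE = [
    "S.#",
    ".##",
    "..E",
]


def shortest_path_length(maze):
    """Return the fewest steps from S to E, or None if E is unreachable."""
    rows, cols = len(maze), len(maze[0])
    start = next(
        (r, c) for r in range(rows) for c in range(cols) if maze[r][c] == "S"
    )
    queue = deque([(start, 0)])  # (cell, steps taken to reach it)
    seen = {start}
    while queue:
        (r, c), steps = queue.popleft()
        if maze[r][c] == "E":
            return steps
        # Try the four neighbouring cells: down, up, right, left.
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and maze[nr][nc] != "#" and (nr, nc) not in seen:
                seen.add((nr, nc))
                queue.append(((nr, nc), steps + 1))
    return None


print(shortest_path_length(MAZE))  # 4
```

BFS explores cells in order of distance from the start, so the first time it reaches the exit it has used the fewest possible steps. If moves had different costs (for example, slow turns), a weighted search would be needed instead.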

Phase 4: Future Vision & Project Showcase

Session 7: The Smart Agent — How AI Assistants Change Our Lives

• Tech Trends: Analyzing the logic behind "AI Agents" (like the popular OpenClaw) and how they automate tasks.

• Imagination Spark: If your robot "Twin" could do your homework or clean your room, what new skills would it need to learn?

• Career Outlook: Exploring 2026’s hottest roles: "Prompt Engineers" and "Spatial Designers."

Session 8: The Stanford Pitch — Presenting Your Spatial Robot Proposal

• Presentation Skills: Learning the art of the "Elevator Pitch" for tech projects.

• Portfolio Review: Organizing the AR works created in the previous sessions into a professional demo video.

• Grand Finale Prep: Preparing questions for the Live Session with Hunter Zhang to secure personalized expert feedback.

Expert Live Session: Inside the Silicon Valley Lab

Theme: From Stanford to the Future – Embodied AI with a Stanford Mentor

• Offline Location: Little Fire Mountain View Office (599 Fairchild Drive, Mountain View, CA)

• Online Access: Global Live Stream (no regional restrictions)

• Participants: 2-6 Offline Students | 10+ Online Students | Ages 8-15

1. 00-30 min: Live Lab Demo (The Dual-Perspective Experience)

The Experience: Hunter broadcasts live from the Little Fire Mountain View Office, demonstrating the latest in Embodied AI.

• Offline Students: Stand right next to the hardware, observing LiDAR sensors and robotic actuators up close.

• Online Students: Watch via a high-definition dual-camera setup (Main View + Robot’s POV).

• Interactive Demo: Demonstrating "Spatial Commands" by navigating a robot through physical obstacles set up by the offline students on-site.

2. 30-60 min: Collaborative "Cloud-to-Physical" Mission

The Mission: Co-training an "AR Navigation Assistant."

• Online Students: Design AR waypoints on their screens and send logic parameters (speed, rotation, avoidance) to the lab.

• Offline Students: Act as "Field Engineers," placing physical barriers on the office floor to test if the online students' logic can successfully guide the robot.

• Learning Goal: Understanding how digital code crosses borders to control physical entities in a Silicon Valley lab.

3. 60-90 min: Stanford Mindset & Roadmap

• Silicon Valley Insights: Hunter shares his journey researching Embodied AI at Stanford and explains why Mountain View is the global heart of AI (neighbors with Google and Waymo).

• Academic Roadmap: Personalized advice for students aged 8-15 on building a competitive profile for top-tier universities and future tech competitions.

• Global Q&A: A round-robin session where offline and online students discuss the 2026 AI landscape and get expert feedback on their course portfolios.

Little Fire Corp